Entrepreneurship tools for learning-focussed team processes

Learning-focussed environments

We have written a fair bit about how we use experiments to drive insight. A learning-focussed approach to our work allows us to ask questions about effectiveness, and overall has led to a very intentional approach to running programmes, workshops and other experiments (like social media ads, blog posts etc). Applying an experiment mindset to our work means we ask ourselves: what are we hoping to learn? What do we think will happen? What are we hoping to find out more about?

Using an experiment set-up means action-research initiatives like Lifehack can build their own evidence base, provided that the experiments are set up correctly (more on that later). It definitely takes practice, but it can help create clarity in complex settings when there’s little else to hold on to. Given our work at the intersection of design, wellbeing science, technology and entrepreneurship, much of the work we were embarking on hadn’t been done before: what happens when you cross wellbeing work with entrepreneurship tools? How does human-centered design align with wellbeing work?

Experiment Sheets

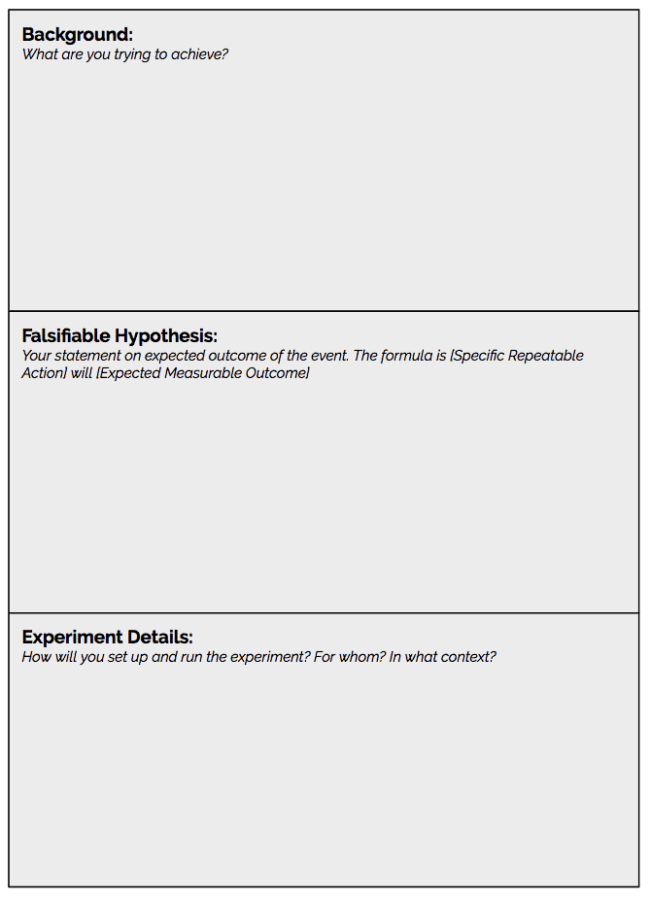

In our early days, we documented most of our work in experiment format. Here’s a link to a sample of an experiment sheet. The left half of the sheet documents the experiment set-up and is filled in before the experiment runs. The box on the top left states the background to the experiment: what is it that you’re trying to achieve? This is followed by the hypothesis, which can be one of the most challenging parts. The idea is to state the expected outcome of the experiment. Ideally it will follow the formula [Specific Repeatable Action] will result in [Expected Measurable Outcome]. I often find it easiest to start thinking about this in an area where results are easier to track, e.g. digital engagement. So, for example: [Posting a cat GIF once per day on Twitter] will result in [a 15% week-on-week increase in Twitter followers].

The experiment details section then sets out exactly how the experiment will run: what time, who’s involved, what type of content will be posted and so on. It’s also useful to state how long the experiment will run for. The shorter the time frame, the faster you’ll get to review the outcomes. At Lifehack we looked at experiments that happened within a programme or event, with a view to reviewing outcomes anywhere from straight after (where possible) to about six months down the track.

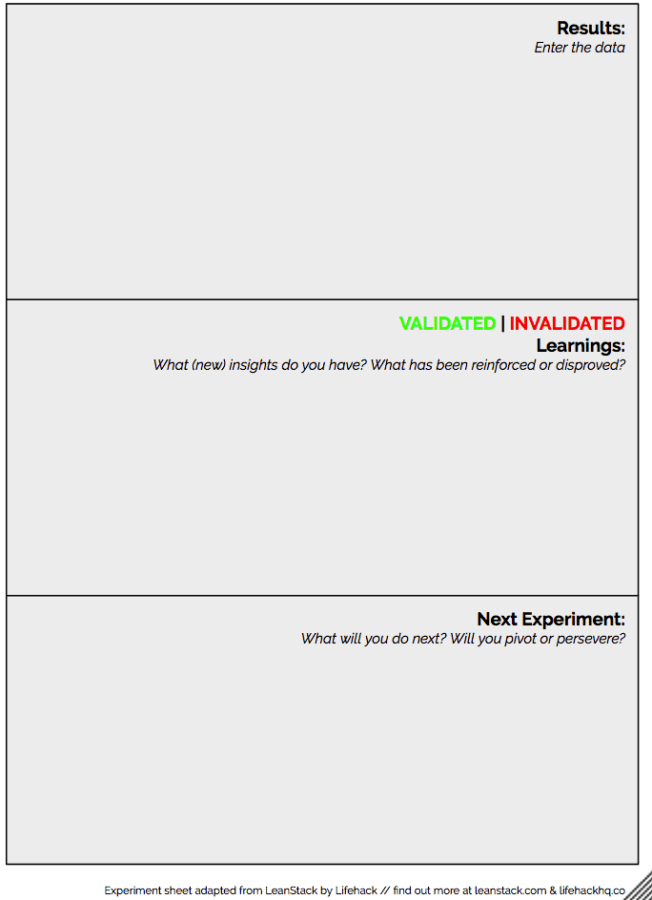

The right side of the template is then filled in once the experiment has run. It documents what happened, what the results specific to the hypothesis are, and what could be done as a next experiment.
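As a rough illustration, the two halves of the sheet could be modelled as a small record. This is only a sketch of the structure described above, not the actual Lifehack template: the class name, field names, the `week_on_week_growth` helper and the follower counts are all hypothetical, chosen to mirror the cat GIF example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExperimentSheet:
    # Left half: filled in before the experiment runs.
    background: str             # what are you trying to achieve?
    action: str                 # [Specific Repeatable Action]
    expected_outcome: str       # [Expected Measurable Outcome]
    details: str                # what time, who's involved, what content
    duration_weeks: int         # how long the experiment will run for
    # Right half: filled in once the experiment has run.
    what_happened: Optional[str] = None
    results: Optional[str] = None
    next_experiment: Optional[str] = None

    def hypothesis(self) -> str:
        """Render the hypothesis using the formula from the sheet."""
        return f"[{self.action}] will result in [{self.expected_outcome}]"

    def is_reviewed(self) -> bool:
        """True once every box on the right half has been filled in."""
        right_half = (self.what_happened, self.results, self.next_experiment)
        return all(box is not None for box in right_half)


def week_on_week_growth(counts: List[int]) -> List[float]:
    """Percentage change between consecutive weekly counts."""
    return [round((b - a) / a * 100, 1) for a, b in zip(counts, counts[1:])]


# Hypothetical example values, for illustration only.
sheet = ExperimentSheet(
    background="Grow our Twitter audience",
    action="Posting a cat GIF once per day on Twitter",
    expected_outcome="a 15% week-on-week increase in Twitter followers",
    details="One GIF each morning, posted by the comms lead",
    duration_weeks=2,
)
print(sheet.hypothesis())

# Invented follower counts at the start, after week 1 and after week 2.
growth = week_on_week_growth([200, 230, 253])
sheet.what_happened = "Posted daily for two weeks"
sheet.results = f"Week-on-week growth (%): {growth}"
sheet.next_experiment = "Try a different posting time"
```

Keeping the right-half fields empty until the review makes it easy to see which experiments still need to be written up.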

For our mahi, we have written up experiment sheets on a wide range of things: running a residential and a non-residential hui (and then comparing group cohesiveness later down the line), self-organised lunch catering (to work out whether having participants sort out lunches for the cohort added to cohesion or wasn’t worth the additional labour), having young people in a cohort (will adult participants state that they have a newfound acknowledgement of young people’s contribution?) and giving conference talks (what interactions happened as a result of what we said?). As you can imagine, the ‘non-digital’ experiments get a lot messier than tracking followers on Twitter. As soon as there are humans involved, it becomes complex, and there is often (naturally) more than one factor that might influence behaviour. The experiment canvas is by no means a perfect approach, but it certainly helps create clarity of thinking, intentionality and an openness to observe and reflect on people’s experiences.

Lean Startup

The experiment set-up above derives from the world of Lean Startup. Wikipedia tells us that “Lean startup is a methodology for developing businesses and products. The methodology aims to shorten product development cycles by adopting a combination of business-hypothesis-driven experimentation, iterative product releases, and validated learning. The central hypothesis of the lean startup methodology is that if startup companies invest their time into iteratively building products or services to meet the needs of early customers, they can reduce the market risks and sidestep the need for large amounts of initial project funding and expensive product launches and failures.”

There are plenty of resources out in the world that might help people set up their own experiments.

Tracking experiments in Trello

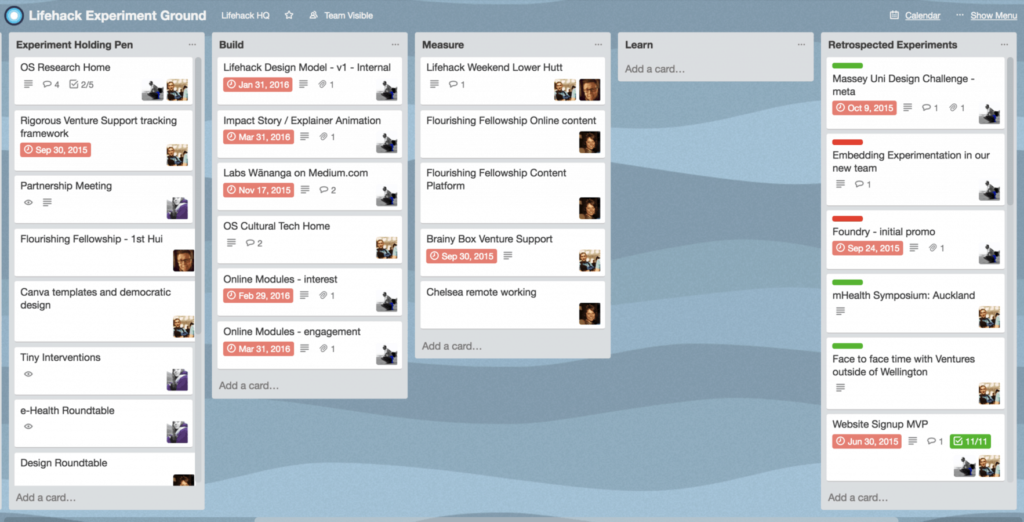

Task-management tools like Trello can help us keep track of the experiments that are currently in play. Trello is essentially an online collaborative to-do list and can be modified to have any headings or content. It can be used for project management or household shopping—anything is possible.

One possible set-up for tracking experiments is a board with the lists (columns) Next Up, In Progress, In Review and Archived. Under each heading you could then add a card, or ticket, with the name of the experiment (e.g. ‘swapping espresso for decaf’) and attach the experiment sheet (or a link to the document). Trello is one of the products from the tech world that we have found improves our working lives (and those of many we have worked with). There’s a free version available.
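To make the flow concrete, here is a minimal sketch of that board as plain Python data. It does not use Trello or its API; the function names are hypothetical and only illustrate how a ticket moves from one heading to the next.

```python
from typing import Dict, List

# The four headings suggested above, in the order a ticket moves through them.
LISTS = ["Next Up", "In Progress", "In Review", "Archived"]


def new_board() -> Dict[str, List[str]]:
    """Create an empty board with one list of tickets per heading."""
    return {name: [] for name in LISTS}


def add_ticket(board: Dict[str, List[str]], ticket: str) -> None:
    """New experiments start under 'Next Up'."""
    board["Next Up"].append(ticket)


def move_ticket(board: Dict[str, List[str]], ticket: str, to: str) -> None:
    """Move a ticket from whichever list holds it to the list named `to`."""
    if to not in board:
        raise KeyError(f"Unknown list: {to}")
    for tickets in board.values():
        if ticket in tickets:
            tickets.remove(ticket)
            board[to].append(ticket)
            return
    raise ValueError(f"Ticket not found: {ticket}")


board = new_board()
add_ticket(board, "swapping espresso for decaf")
move_ticket(board, "swapping espresso for decaf", "In Progress")
print(board)
```

In Trello itself the same moves are done by dragging a card from one list to another.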

Blending entrepreneurship and wellbeing

Sitting at the intersection of a few different worlds, we came to realise that not all tools are easily transferable from one world to the next. For example, the experiment process and Lean Startup methodology might work to a certain extent. But operating in a wellbeing-centric world, we realised that additional considerations needed to be integrated into the running of experiments in a youth and/or wellbeing context.

The sentiment of ‘get out of the building and talk to people’, which is often promoted in the entrepreneurship world, particularly when it comes to validating assumptions, isn’t as straightforward in a health context. Imagine someone working on a project for people acutely suffering from depression, talking to a stranger on the street about their experience with depression, asking a bunch of questions (perhaps not even catching a name) and then walking off, never to interact with that person again. How do we know the person wasn’t badly triggered by the questions? Do they have the right level of support and care around them? Did they consent to the interaction, the topic and the way of inquiry? Those are just some of the questions that need to be considered when working on sensitive topics.

Over time, we’ve come up with a few questions to help guide our interactions with other people when it comes to quality, safety, ethics and rigour in a codesign environment. The terms we use are:

- Responsibility

- Reciprocity

- Expertise

- Accessibility

Responsibility:

What are your responsibilities to yourself, the people you serve and to your team and/or organisation?

Reciprocity:

What is the exchange – is it knowledge, service, power or something else?

What are the mutual benefits?

Expertise:

Who is considered the “expert” in this community, project, initiative or organisation?

Accessibility:

What does it take to make what you do accessible?

What does accessibility mean in your context?

These rough guidelines can help us set up better experiments (read: safer, more ethical, of higher quality and with more rigour applied). They are principles to apply in any situation where we interact with others, but most definitely when it comes to experiment thinking, design (participatory, human-centered, co-design) and applying entrepreneurship tools in the world of health and wellbeing.

Keep your eyes peeled for future blog posts on the intersection of ethics and wellbeing design.