Enabling Youth Wellbeing: Developing a Lifehack Impact Model

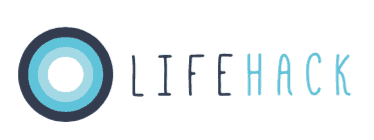

Over several months at the end of 2016 we developed a comprehensive impact model for Lifehack. It helps consolidate and share what we’ve learnt between 2013 and 2016 about how an innovation initiative like Lifehack creates and tracks impact. The Impact Model makes explicit the outcomes and kinds of impact we seek to create and provides examples of how we have done that so far. It also articulates the connections between our activities and what is known about the systemic barriers and goals relevant to increasing youth wellbeing. This post provides some background on why and how we developed the model. The Impact Model and accompanying report are now available via our Lifehack resources page. You can also view a user-friendly animated version of the model on our website, thanks to 2016 Flourishing Fellow Christel.

Why did we need an Impact Model?

In 2013, Enspiral took over the Lifehack contract. A key aspect of Enspiral’s approach was to reframe Lifehack away from “addressing mental health problems” towards a strengths-based model focused on flourishing and on promoting the protective factors for youth mental health and wellbeing. From the 2015 Lifehack Report:

We realised the need for a different and diverse approach to the issues surrounding youth mental health in Aotearoa New Zealand. Our approach is to engage young people and those that work with them to lead and/or be involved in developing evidence-based interventions which improve youth wellbeing…. A flourishing society requires investment in young people that focuses not only on minimising deficits and treating issues, but on building capability and skills that will enable rangatahi (young people) to be healthy, resilient and well-prepared for their lives despite the inevitable ups and downs.

Methods to evaluate the impact of innovation platforms like Lifehack are still emerging internationally. The challenges of evaluating the impact of primary prevention activities are well recognised. We were never designed to be a service provider, so counting how many young people we’d engaged with would not necessarily reflect our impact accurately. Rather, our goal was to learn how best to build capability across the system that would have a collective impact on youth wellbeing.

Experimentation and learning loops have always been at the heart of Lifehack’s structure and programme content. This commitment to reflection and evaluation was inherited from the human-centred design, startup and social enterprise culture within Enspiral (see, for example, our tools and blog posts on experimentation, “Running Lean Start Experiments” and “How We Use Experiments To Drive Insight”). To illustrate, between 2013 and 2016 our team of four deployed more than 100 surveys and polls and produced 16 reports, as well as many blog posts reflecting on our programme successes and failures.

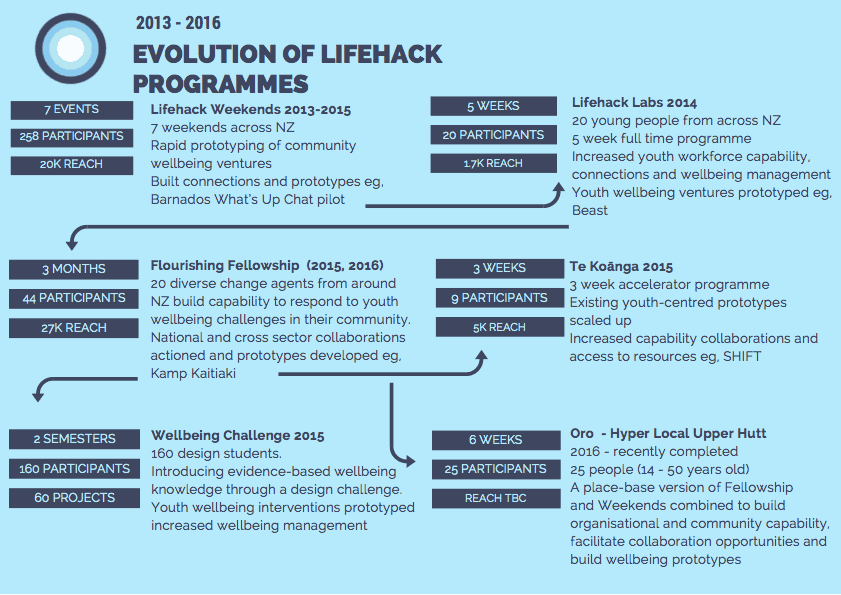

We’ve documented how these programme changes have had positive impacts for our participants and fellows (for example, see the 2015 and 2016 Fellowship videos and the Impact Story and Massey Wellbeing Challenge report) and tested out different ways to track impact (for example, using the different Capitals: https://lifehackhq.co/impact-evaluation/ and https://lifehackhq.co/introducing-sixth-capital-wellbeing/). We have consistently co-designed and iterated our programmes, evolving our focus and interventions in response to what we’ve learned (see, for example, Lifehack Programme Evolution in Figure 2). We’ve also been committed to sharing these learnings as widely as possible (see, for example, our “Lifehack Codesign Guide”).

Evolution of key Lifehack programmes driven by evaluation and reflection on programme outcomes, strengths, weaknesses and successes (not an exhaustive list).

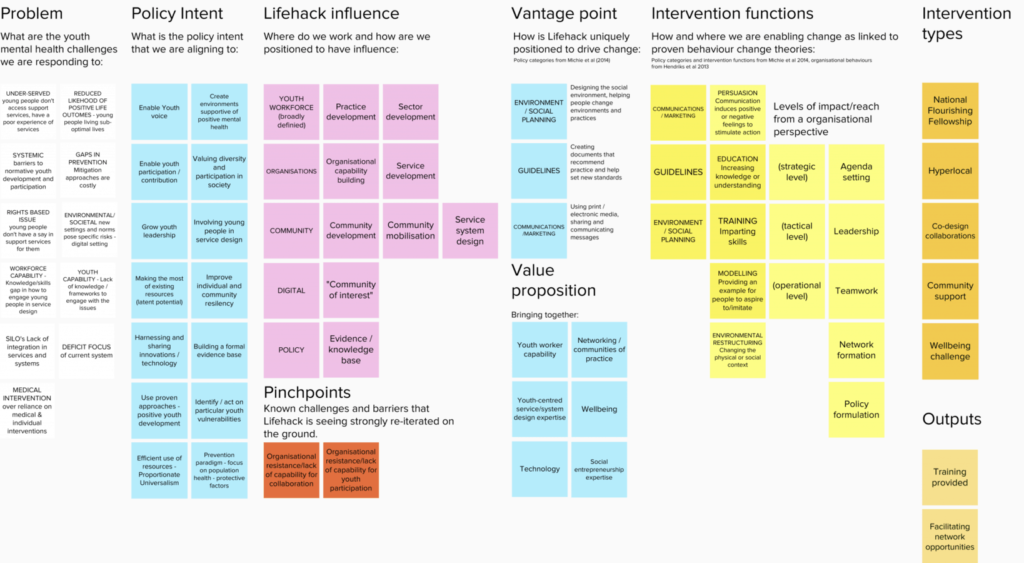

However, as the Lifehack approach evolved, we needed to close the gap between the initial expectations for the initiative (which focused on apps and social media) and the strategic direction that had emerged from our experiments, along with what we had learned about youth wellbeing and ways to create impact. We needed a way to identify and track appropriate outcomes at different levels (e.g., young people, programme participants, organisations, communities) and to connect the prevention paradigm effectively to policy priorities.

We had made concerted efforts to report our impact on participants, but needed to do better at assessing the impact of our programmes on youth wellbeing more broadly. Whilst we had evidence to support the results of the smaller programmatic experiments, we needed a framework and process for assessing our impact at a system level. Was our approach to creating systems change working? Were the programmes or actions delivered by our partners collectively making change? Were we having the needed ripple effects across the system, and into the lives of young people? Indeed, were we achieving the longer-term outcomes that would move us towards our goal of all young people flourishing in 2050, and if so, how?

In 2016 we undertook two programmes of work to address this gap in articulating and documenting our practice and impact. The first was a partnership with impact-programme evaluation startup Thicket Labs to trial a specific approach to evaluating the 2016 Fellowship programme. The second was to work with Geoff Stone of Ripple Collective to retrospectively understand and articulate Lifehack’s broader impact. This process included enhancing and standardising our approach to evaluation overall, and sharpening our attention to who we were working with, why and to what end. As a result, we developed the Impact Model and an initial set of data-driven impact stories that show how the model works in practice and provide evidence for it.

The Impact Model helps articulate Lifehack’s approach and the changes we intend to produce within a broader policy framework. It also offers others working in the social innovation and social labs space an example of how we might account for varied, systems-level impacts.

How we developed and tested the model and Lifehack’s capacity to create impact

We developed the model by doing two things simultaneously. First, we made Lifehack’s intended outcomes explicit, mapping out the change we expected to see as a result of our interventions using standard and proven behaviour change categories and concepts. At the same time, we compared these with the data we were gathering about actual impact from past programme participants, collected through interviews and other channels. We used this data, developed into specifically structured Impact Stories, to check that there had actually been sustainable impact and to confirm or refine the categories in which Lifehack could meaningfully, and with evidence, claim to have had impact. We wanted to learn about intended and unintended consequences, as well as things that had been unsuccessful or where no change had occurred. In some cases the evidence for an outcome was not strong enough across any of the stories, so we did not include that outcome in the model. In other cases we discovered outcomes we had not necessarily been aware of. Through this process we were able to refine the model based on evidence.

This process has confirmed for us some of the things we hoped were working, sharpened our focus on the potential and importance of some areas, and directed us to put greater effort into others. Our focus for 2017 is to thicken the evidence base for the model and to better understand our reach (i.e., how consistent these outcomes are across the board).

Developing an Impact Model

The key steps we took in developing the model are summarised below. We plan to share a more in-depth version of this soon for those interested in similar approaches.

Step 1.

Gather together as much evidence of outcomes and change (connected to Lifehack interventions) as possible from existing materials. We looked at past evaluations, online discussion with past participants, ad hoc feedback received, evidence of changes to programmes, participant posted videos, blog posts, media reports and so on.

Step 2.

Develop a set of change-focused interview questions that would help identify where and how change had happened in the work practices of Lifehack partners, whether these changes had in turn affected their approach to working with young people (e.g., increased commitment and capacity for co-design), and what role (if any) Lifehack interventions had played in this.

Step 3.

Identify a number of people and groups who had been through Lifehack programmes and whom we could speak with. Interview them and, where possible, gather additional levels of evidence (e.g., feedback or evaluation from young people they worked alongside, reflections from colleagues or others that reinforced or verified findings, investment or support they had been able to access as a result).

Step 4.

Conduct a literature review of key texts and existing evidence relating to:

- Protective factors and risk factors for youth mental health and wellbeing and positive youth development (national and international)

- Significant proven behaviour change literature and models

- Significant literature and research related to the YMH project and related policies within MSD, MOH and MYD

Step 5.

Develop draft Impact Stories: structured data driven stories that show what change has occurred, Lifehack’s role in that change and what kind of outcomes had been activated, and confirm these with participants.

Step 6.

Use a standard model to make explicit the elements of Lifehack’s approach, including our vantage point for driving change, intervention types, who we work with, outputs and outcomes. Develop a consistent language across the material collected, including categories and lists that would allow us to code things more consistently (e.g., risk factors and our partners’ capacities for influence).

Step 7.

Confirm outcome categories on the basis of the data and the strength of evidence in the stories, and refine categories across all sections of the model.

We have a model, what next?

We now have a consistent set of outcomes and key groups that we work with, which we use in the design and evaluation of all our programmes. We also have a consistent set of questions we can ask at different points in a programme to help guide who we work with and to assess how well the initiative is meeting needs, as well as a standard structure for developing impact stories.

We are continuing to develop the toolset, the outcomes and their definitions and examples as we gather more data and evidence. We are working to embed the evaluation process more deeply in the programme and to make it more accessible to different types of people, including young people. We will also keep collecting data-driven impact stories, and we are finding different ways to share what we’ve learnt about how to track impact in this kind of context.

Thanks again to Geoff Stone, and to all of those who participated in the development of the model. Thanks also to all our partners who continue to work towards the goal of all young people flourishing in Aotearoa New Zealand!